The Road to Software 2.0 or Data‑Centric AI

This article gives a brief, biased overview of our road to data-centric AI (AKA Software 2.0). The hope is to provide entry points for people interested in this area, which has been scattered in nooks and crannies of the overall AI picture—even while it drives some of our favorite products, advancements, and benchmark improvements. Our plan is to collect pointers to these resources on GitHub, write a few more articles about exciting directions, and engage with folks who are excited about it.

Maybe you?

Starting in about 2016, researchers from our lab went around giving talks about an intentionally crazy idea: ML models—long the darlings of researchers and practitioners—were no longer the stars of the show. In fact, models were becoming commodities. Quelle Horreur! Jumping a bit ahead, what did we claim would drive progress? The data (in particular, the training data).

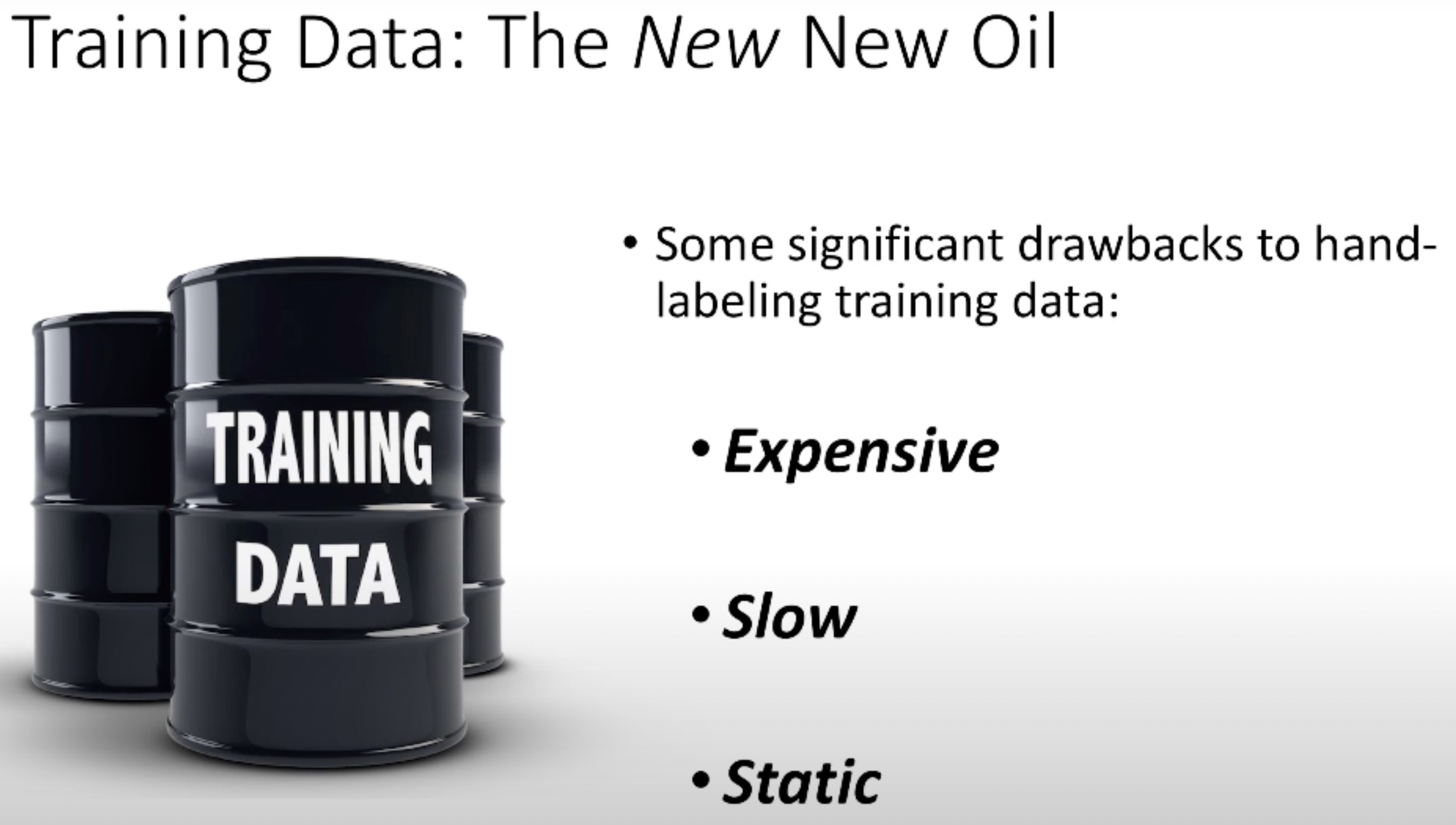

In a series of papers and talks, we had taglines like “AI is driven by data, not code” or worse (but our personal favorite) “Training data is the new new oil”. What does that even mean? Moving ahead with our oil baron lifestyle, we started building systems championed by little octopuses wearing snorkels (octopuses clearly need help breathing underwater). Neither marketing nor marine biology is a lab strong suit. Eventually, we turned to others and called this “Software 2.0” (inspired by Karpathy’s post). After the octopus got venture funding, professionals started calling it data-centric AI. Recently, Andrew Ng found this to be a not-totally-embarrassing name and gave a great talk about his perspective on this direction. Thanks, Andrew!

Our view that models were becoming a commodity was heretical for a few reasons.

First, people often think of data as a static thing, and they have a point. Data literally means “that which is given”. (Hat tip to Ben Recht!) Most ML people download a PyTorch dataloader, and that’s where ML begins: losses down, wheels up, with the data a mere accessory.

But to an engineer in the wild, the training data was never “that which is given”. It was the result of a process, usually a dirty, messy process not worth bragging about.

Still, there was hope. This was a process and therefore could be engineered and programmed. In applications, we took time to clean and merge data. We engineered it. We began to talk about how AI and ML systems were driven by this data, how they were programmed by this data. This led to (understandably) obtuse names like “data programming”.
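To make “programmed by this data” a bit more concrete, here is a toy sketch of the flavor of data programming: instead of hand-labeling examples, you write small heuristic functions that vote on labels. Everything in it is made up for illustration (the spam task, the `lf_*` functions, the `majority_vote` helper), and combining votes by simple majority is a stand-in we chose for brevity; real systems typically model how accurate each heuristic is rather than counting votes.

```python
from collections import Counter

# Hypothetical label values for a toy spam task.
ABSTAIN, HAM, SPAM = -1, 0, 1

# Each "labeling function" is a cheap, noisy heuristic that either
# votes for a label or abstains. These three are invented examples.
def lf_contains_link(text):
    return SPAM if "http" in text.lower() else ABSTAIN

def lf_mentions_prize(text):
    return SPAM if "prize" in text.lower() else ABSTAIN

def lf_short_reply(text):
    return HAM if len(text.split()) <= 3 else ABSTAIN

LABELING_FUNCTIONS = [lf_contains_link, lf_mentions_prize, lf_short_reply]

def majority_vote(text):
    """Combine heuristic votes by simple majority; abstain on ties or no votes."""
    votes = [lf(text) for lf in LABELING_FUNCTIONS]
    votes = [v for v in votes if v != ABSTAIN]
    if not votes:
        return ABSTAIN
    ranked = Counter(votes).most_common()
    if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
        return ABSTAIN  # the heuristics disagree evenly
    return ranked[0][0]

unlabeled = [
    "Claim your prize at http://example.com",
    "ok thanks",
    "See you at the meeting tomorrow morning",
]

# The "program" (the heuristics) produces the training labels.
train_labels = [majority_vote(text) for text in unlabeled]
```

The point of the sketch is only that the training set becomes the output of code you can edit, debug, and rerun. Changing a heuristic changes the labels, which in turn changes whatever model you train downstream.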

Second, this pitch was not only heretical but also annoying to our friends in ML (and even to us). Channeling the great Josh Wills, this pitch was bound to be hated by ML researchers because “we were eating their dessert”. Many ML researchers (ourselves included) love to write models; it is dessert. If you haven’t had the joy of writing a PyTorch model, you’re missing out! JAX is pretty awesome too. When PyTorch came out, it was rumored to improve your skin and your eyesight.

Unfortunately, we were telling people to put on galoshes, jump into the sewer that is your data, and splash around. Not an easy sales pitch for researchers used to life in beautiful PyTorch land.

We started to recognize that new-model-itis is a real disease. With some friends at Apple, we realized that teams would often spend time writing new models instead of understanding their problem, and its expression in data, more deeply. Writing a new model was a beautiful refuge from the mess of understanding the real problems. We weren’t the only ones thinking this way; lots of no-code AI folks, like Ludwig, H2O, and DataRobot, were too. We began to argue that this aversion to data didn’t really lead to a great use of time.

To make matters worse, 2016-2017 was a thrilling time to be in ML, so our view was even more unpleasant than the sewer analogy may make it seem (if that’s possible). Each week a new model came out, and each week, it felt like if we could just run backprop a little bit longer, we could crack anything! It was an awesome feeling, and we all spent a lot of time watching those losses go down and waiting for the wheels to go up. Honestly, those machines produced demos that we couldn’t have dreamed of a decade earlier.

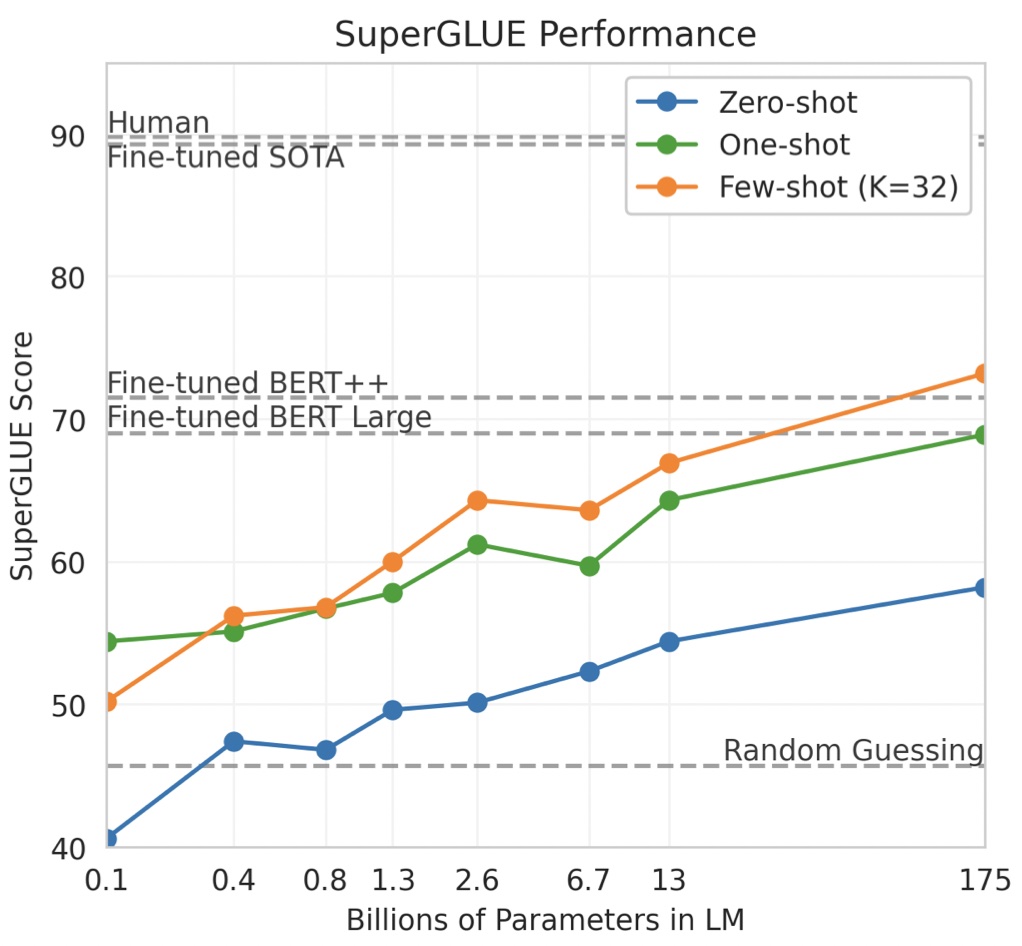

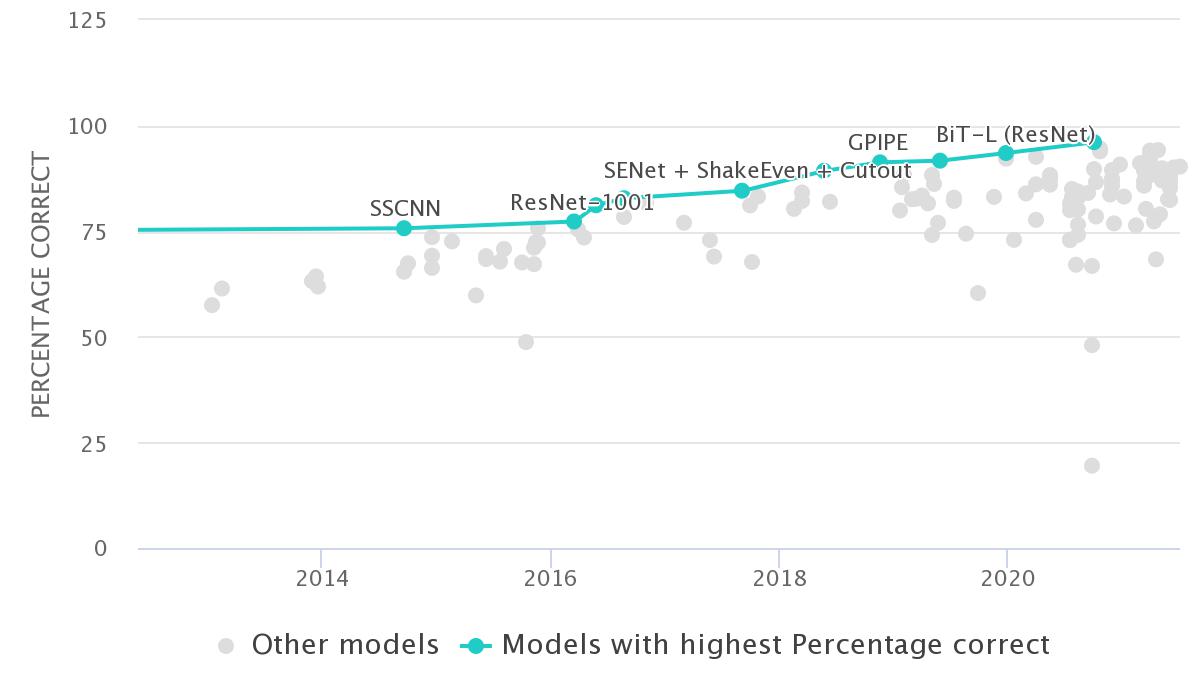

Despite this excitement, it was clear to us that success or failure at a level usable in the applications we cared about (in medicine, at large technology companies, or even in pushing the limits on benchmarks) wasn’t really tied to models per se. That is, the advances were impressive, but they were hitting diminishing returns. You can see this in benchmarks, where most of the progress after 2017 is fueled by advances in augmentations, weak supervision, and other aspects of how you feed machines data. In round numbers, ten points of accuracy were due to those augmentations, while model improvements were squeaking out a few tenths of a point of accuracy.

We were pretty confident that some large funded lab would keep machining away the last bits until the models were usable by everyone, and then even the weights themselves would be put online in model hubs like those now provided by the amazing people at Hugging Face (who, by the way, are awesome, thank you! Check out BigScience). Models were no longer the main obstacle.

This blog post is an incomplete, biased retrospective of this road (potentially as a prelude to writing something serious, but probably not). We’ll close with two thoughts:

- There is a data-centric research agenda inside AI. It’s intellectually deep, and it has been lurking at the core of AI progress for a while. Perhaps by calling it out we can make even more progress on a viewpoint we love!

- We’d love to provide entry points for folks interested in this area. We get invitations about how to “do Software 2.0”, but the relevant results are scattered across a number of different research papers. We’d enjoy writing a survey (if anyone is interested!). We’ve opted to be biased about what influenced us the most to try to present a coherent story here. Necessarily, this means we’re leaving out amazing work. Apologies; please send us notes and corrections. On our end, we’ll do our best to build this community up on GitHub, with a collage of exciting related papers and lines of work.
